Validity of Methods

Assessment methods and psychometric tests should be backed by validity and reliability data, and by research supporting the claim that the test is a sound measure.

Reliability is a very important concept and works in tandem with validity. A guiding principle in psychology is that a test can be reliable but not valid for a particular purpose; however, a test cannot be valid if it is unreliable.
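Classical test theory offers a simple way to see why an unreliable test cannot be valid. Under its assumptions, a test's correlation with any criterion cannot exceed the square root of the product of the test's reliability and the criterion's reliability. The minimal Python sketch below computes that ceiling. The function name `max_validity` and the reliability values are hypothetical and only illustrate the idea.

```python
import math

def max_validity(test_reliability: float, criterion_reliability: float = 1.0) -> float:
    """Upper bound on a validity coefficient under classical test theory.

    Assumes measurement errors are independent; the observed test-criterion
    correlation cannot exceed sqrt(test reliability * criterion reliability).
    """
    for rel in (test_reliability, criterion_reliability):
        if not 0.0 <= rel <= 1.0:
            raise ValueError("reliabilities must lie between 0 and 1")
    return math.sqrt(test_reliability * criterion_reliability)

# Hypothetical reliabilities: the less reliable the test, the lower the ceiling.
for rxx in (0.9, 0.7, 0.5, 0.2):
    ceiling = max_validity(rxx, criterion_reliability=0.8)
    print(f"test reliability {rxx:.1f} -> validity can be at most {ceiling:.2f}")
```

This is only an upper limit. A highly reliable test can still have low validity for a particular purpose, which is why reliability is necessary but not sufficient.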

What is Validity?

Assessment methods, including personality questionnaires, ability assessments, interviews or any other method, are valid to the extent that they measure what they were designed to measure. There are 5 different aspects of validity, some of which are more important than others:

The 3 Most Important Aspects of Validity

Predictive Validity

For those interested in how useful tests are for predicting work-related outcomes, the most important aspect of validity is predictive validity (a form of criterion-related validity).

Predictive validity is the extent to which a test or questionnaire predicts some future or desired outcome, for example work behaviour or on-the-job performance. This validity has obvious importance in personnel selection, recruitment and development.

Predictive validity is of particular interest to psychologists and HR professionals because it lets us extrapolate from a test taken today to a meaningful prediction about an employee's future behaviour.

Predictive validity is usually measured by the correlation between the test score and some appropriate criterion. The criterion could be performance on the job, training performance, counter-productive behaviours, manager ratings on competencies or any other outcome that can be measured.

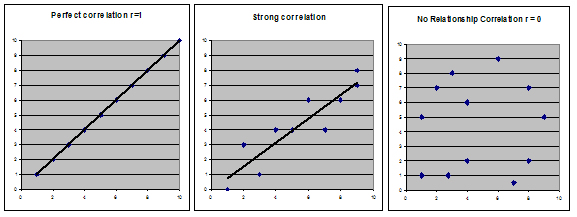

A validity coefficient (denoted by r) is a correlation between a test score and some criterion measure (such as performance).
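As a minimal sketch of how a validity coefficient is calculated, the Python function below computes the Pearson correlation between each person's test score and their criterion score. The function name and the data (selection test scores and later manager ratings for eight people) are hypothetical. In practice you would use a statistics package and a much larger sample.

```python
import math
from statistics import mean

def validity_coefficient(test_scores, criterion_scores):
    """Pearson correlation r between test scores and a criterion measure."""
    if len(test_scores) != len(criterion_scores):
        raise ValueError("each person needs one test score and one criterion score")
    if len(test_scores) < 2:
        raise ValueError("need at least two people")
    mx, my = mean(test_scores), mean(criterion_scores)
    # Sum of cross-products of deviations from each mean.
    cov = sum((x - mx) * (y - my) for x, y in zip(test_scores, criterion_scores))
    sx = math.sqrt(sum((x - mx) ** 2 for x in test_scores))
    sy = math.sqrt(sum((y - my) ** 2 for y in criterion_scores))
    if sx == 0 or sy == 0:
        raise ValueError("scores must vary to compute a correlation")
    return cov / (sx * sy)

# Hypothetical data: selection test scores and later manager ratings (1-5).
selection_scores = [52, 61, 45, 70, 58, 66, 49, 74]
manager_ratings = [3, 4, 2, 4, 3, 5, 3, 5]
print(f"r = {validity_coefficient(selection_scores, manager_ratings):.2f}")
```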

A test may appreciably improve predictive efficiency if it shows any significant correlation with the criterion, however low. A correlation of r = 0 indicates no relationship and r = 1 a perfect positive relationship. Coefficients can also be negative (down to r = -1) when higher test scores go with lower criterion scores.

The significance of a correlation indicates how likely it is that a relationship at least this strong between the two sets of data would appear by chance alone if no real relationship existed, as illustrated in the graphs.

A larger sample reduces the probability that a correlation of a given size arises by chance. The minimum value of r needed to reach statistical significance therefore decreases as the sample size increases.
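The Python sketch below shows how the significance threshold falls as the sample grows. It uses Fisher's z-transformation, a standard large-sample approximation to the usual t-test for a correlation. It estimates the smallest r that reaches significance (two-tailed, alpha = .05) and the p-value for a fixed r of .25 at several sample sizes. The function names and the r = .25 example are hypothetical, and exact critical values from the t distribution differ slightly for small samples.

```python
import math
from statistics import NormalDist

def correlation_p_value(r, n):
    """Approximate two-tailed p-value for 'true correlation = 0' (Fisher z)."""
    if n <= 3:
        raise ValueError("the Fisher z approximation needs n > 3")
    if not -1 < r < 1:
        raise ValueError("r must lie strictly between -1 and 1")
    z = math.atanh(r) * math.sqrt(n - 3)
    return 2 * (1 - NormalDist().cdf(abs(z)))

def critical_r(n, alpha=0.05):
    """Approximate smallest |r| significant at `alpha` (two-tailed) for n people."""
    if n <= 3:
        raise ValueError("the Fisher z approximation needs n > 3")
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return math.tanh(z_crit / math.sqrt(n - 3))

for n in (20, 50, 100, 500):
    print(f"n = {n:4d}: r must exceed about {critical_r(n):.2f}; "
          f"p for r = .25 is {correlation_p_value(0.25, n):.3f}")
```

The same r = .25 is far from significant with 20 people but highly significant with 500.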

Predictive Validity of Different Selection Methods

Schmidt, Oh & Shaffer (2016) analysed 100 years of research into the predictive validity of selection methods. Notably, this research found that the most predictive single measure is IQ (general mental ability, GMA). The combinations of methods with the best validity and utility for predicting job performance are an IQ test plus an integrity test (.78) and an IQ test plus a structured interview (.76).
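When two methods are combined, their joint validity depends on each method's validity and also on how strongly the two methods correlate with each other. For two predictors, the multiple correlation R can be calculated from these three correlations, as in the Python sketch below. The input values are hypothetical, so the output will not reproduce the .78 and .76 figures reported by Schmidt, Oh & Shaffer (2016).

```python
import math

def combined_validity(r1, r2, r12):
    """Multiple correlation R of two predictors with one criterion.

    r1, r2: validity of each predictor; r12: correlation between the predictors.
    """
    if abs(r12) >= 1:
        raise ValueError("predictors must not be perfectly correlated")
    r_squared = (r1 ** 2 + r2 ** 2 - 2 * r1 * r2 * r12) / (1 - r12 ** 2)
    if r_squared < 0:
        raise ValueError("these three correlations are mutually inconsistent")
    return math.sqrt(r_squared)

# Hypothetical validities: predictor A r = .50, predictor B r = .40.
for overlap in (0.0, 0.3, 0.6):
    print(f"predictor overlap {overlap:.1f}: combined R = "
          f"{combined_validity(0.50, 0.40, overlap):.2f}")
```

The gain from a second method is largest when it overlaps little with the first. The more the two methods measure the same thing, the less the second one adds.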

IQ (GMA) is also an excellent predictor of job-related learning at all job levels studied. The authors go on to state: “GMA can be considered the primary personnel measure for hiring decisions, and we can consider the remaining 30 personnel measures as supplements to GMA measures”.

In their paper “Comprehensive meta-analysis of integrity test validities”, Ones, Viswesvaran & Schmidt (1993) found that integrity tests predicted counterproductive work behaviours with a correlation of r = .58.

Construct Validity

Construct validity is the theoretical side of validity: the extent to which performance on the test fits the theory and research already established for the attribute or construct the test aims to measure.

In essence, the more a test fits into the wider research picture, the more construct validity we can attribute to it.

Typically, test makers collect data from the same participants on a number of tests that attempt to measure similar constructs, and check whether scores on the new test relate to them as expected.
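A minimal Python sketch of this idea uses hypothetical scores for the same eight participants on three measures: a new test, an established test of the same construct, and a measure of an unrelated construct. If the new test has construct validity, we would expect a high correlation with the established test (convergent evidence) and a low correlation with the unrelated measure (discriminant evidence). All data and names here are invented for illustration, and a real study would need far larger samples.

```python
import math

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("scores must vary to compute a correlation")
    return sxy / math.sqrt(sxx * syy)

# Hypothetical scores for the same eight participants on three measures.
new_test = [12, 15, 9, 20, 17, 11, 14, 18]
established_same_construct = [30, 36, 25, 44, 40, 27, 33, 41]
unrelated_construct = [4, 6, 5, 5, 3, 4, 6, 3]

convergent = pearson_r(new_test, established_same_construct)
discriminant = pearson_r(new_test, unrelated_construct)
print(f"convergent r = {convergent:.2f}, discriminant r = {discriminant:.2f}")
```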

Creating a picture of construct validity can take considerable time and complex statistical analysis such as factor analysis.

Concurrent Validity

Concurrent validity is the relationship between test scores and a criterion measure of job or training performance, with both collected at the same time.

This type of validity is usually assessed with existing employees and can be useful for assessing current skill levels and future training requirements.

The 2 less relevant aspects of validity are:

Face Validity

Face validity of a test or method concerns the look and feel of the assessment items and whether an applicant can see any relevance of the test or assessment method to the job or role concerned.

Whilst high face validity may make candidates feel more comfortable because the test seems related to the job or role, it says nothing about whether the test is a good or sound measure.

High face validity does not in any way imply that the test actually predicts anything useful, such as on-the-job performance.

Unfortunately, some test makers promote high face validity as ideal without pointing out its drawbacks.

The main drawback seriously affects the information you get from the individual and its usefulness: it makes the test or assessment much easier to fake or manipulate.

Content Validity

Content validity of a test is concerned with how well a test samples the behavioural domain it is trying to measure.

For example, if you wanted to measure general numerical ability and the test items were all multiplication problems, the test would have poor content validity because the items do not represent all the aspects that make up general numerical ability.
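One rough way to check how well items sample a domain is to map each item to a facet of a test blueprint and count how many facets are represented. In the Python sketch below, the set of facets making up "general numerical ability" is a hypothetical assumption. Coverage is only a crude proxy, because real content validation relies on expert judgement about what the domain includes.

```python
# Hypothetical blueprint: the facets assumed to make up general numerical ability.
DOMAIN_FACETS = frozenset({
    "addition", "subtraction", "multiplication", "division",
    "fractions", "percentages", "data interpretation",
})

def blueprint_coverage(item_facets, domain=DOMAIN_FACETS):
    """Return the share of domain facets sampled by at least one item and the facets missed."""
    covered = set(item_facets) & domain
    missing = sorted(domain - covered)
    return len(covered) / len(domain), missing

# Each entry is the facet that one test item samples.
multiplication_only = ["multiplication"] * 20
balanced = sorted(DOMAIN_FACETS) * 3

for name, items in (("multiplication only", multiplication_only), ("balanced", balanced)):
    share, missing = blueprint_coverage(items)
    print(f"{name}: {share:.0%} of facets covered; missing: {missing or 'none'}")
```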

Establishing content validity often requires a detailed job analysis, which is usually not available.

Content validity is also harder to establish for complex constructs such as intelligence and self-esteem, because it is not easy to decide what the domain being sampled should include. So, like face validity, content validity is not usually the main focus of psychologists.